Not every enrollment slowdown is a site problem. Sometimes it's a portfolio problem. Here's how to tell the difference and what to do about it.

Here's a scenario that may be more familiar than sponsors like to admit.

You're four months into recruitment. A few sites are quiet. Your CRA checks in and reports back that things are moving. The monthly update looks passable.

Then, buried in a feasibility review for an adjacent program, someone notices: three of your underperforming sites opened competing studies in the same indication (!!).

Same patient population. Overlapping eligibility criteria. Two of them opened within the last six weeks.

Nobody flagged it. Nobody had a process to flag it. And by the time it surfaces in the data, you've (potentially) lost six to eight weeks of enrollment you can't get back.

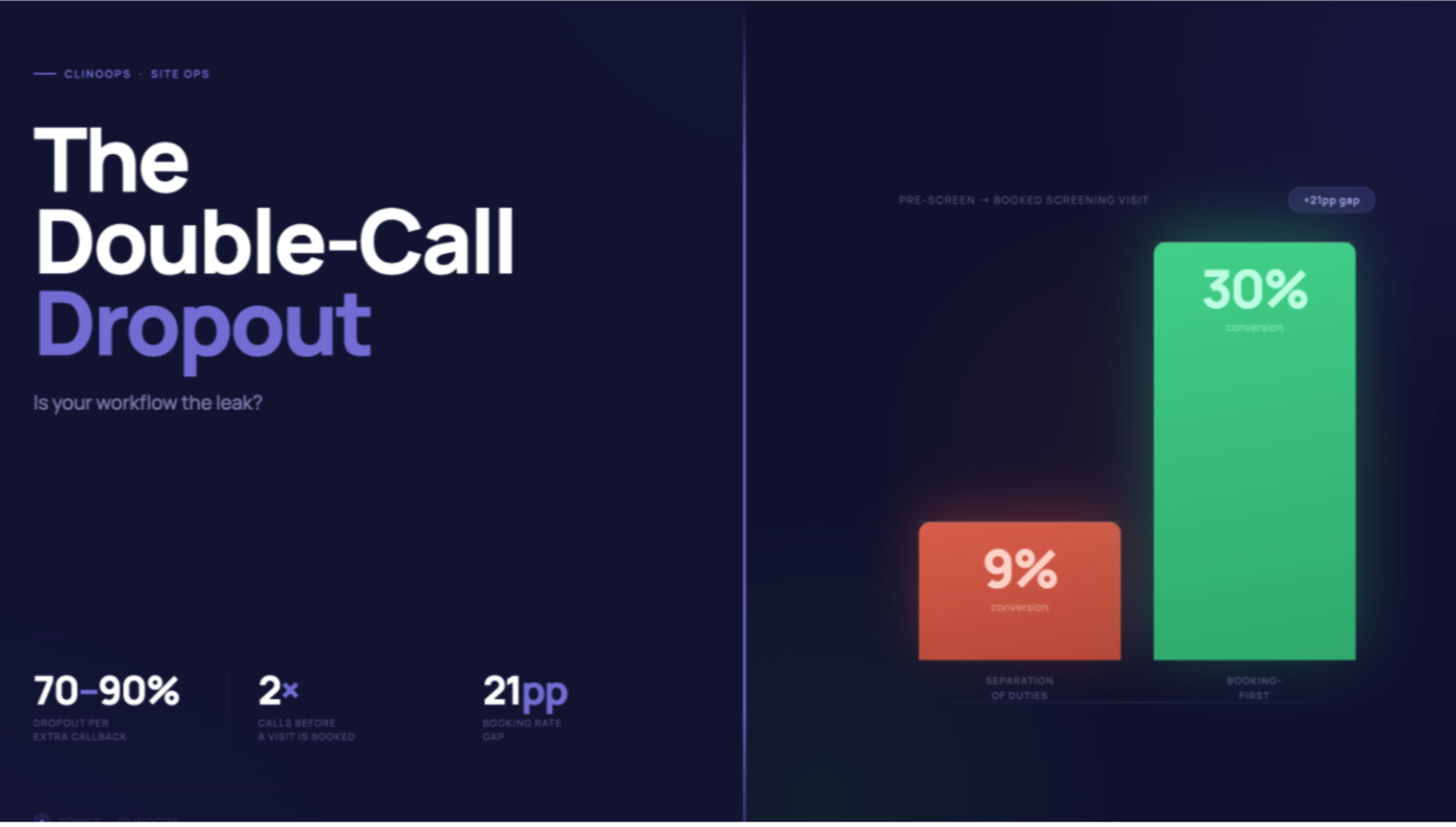

This tends to get misattributed. The site looks disengaged. The PI seems less responsive. Sponsors reach for the usual explanations: phone screening issues, the month-3 plateau. Those are real. But sometimes the simpler answer is that your site opened a study that's easier, better compensated, or less burdensome than yours... and your trial is now competing for the same coordinator hours, the same PI attention, and the same patient pool.

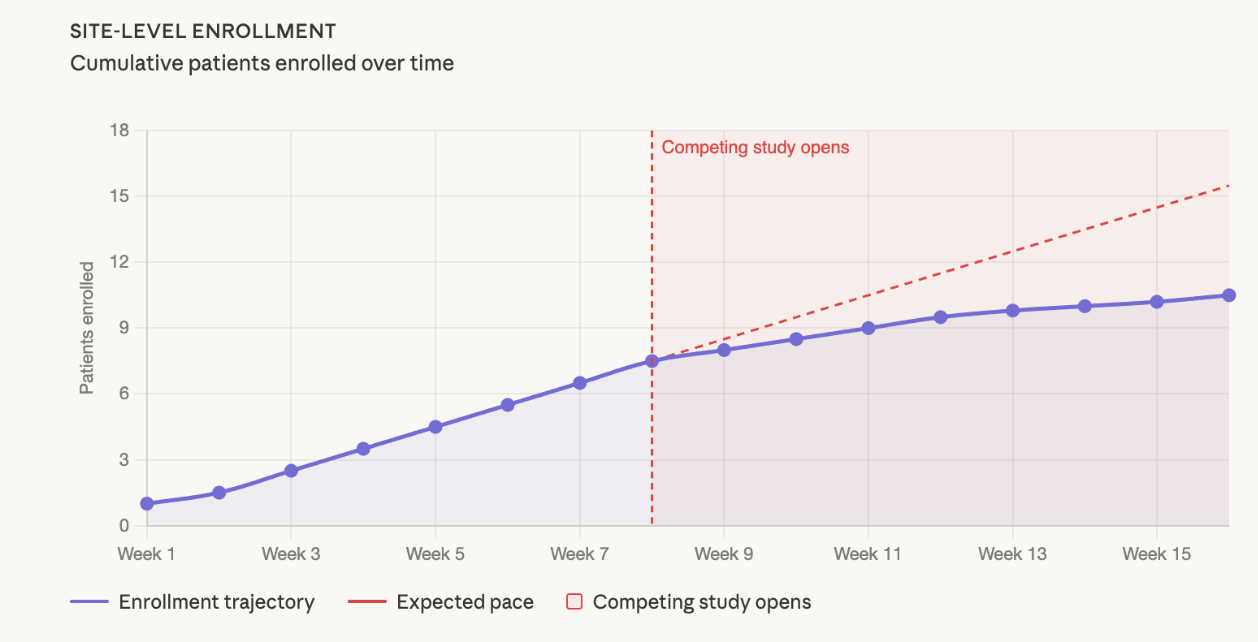

What it actually looks like

In CNS, where the eligible patient population at any given site is already constrained, a competing study in the same indication can materially dilute enrollment within weeks. (Power supports patient recruitment across CNS, I&I and neuropsychiatry, including ~25% of active MDD studies in the US.) The signal is subtle at first: fewer pre-screens logged, visits spaced further apart, callbacks taking longer without a clear explanation. By the time it registers in a monthly dashboard, the site may have quietly directed a cohort's worth of patients elsewhere.

Steve Brannan described a version of this from his Karuna days (see our past discussion with him here). When one site enrolled three consecutive patients over age 55, well above the trial's median age, he called the PI directly. It turned out the site was funneling younger patients into a competitor's trial that had an upper age cap, and Karuna was getting the overflow. After that call, the pattern reversed: "The next three patients they did were all under the age of 55."

The point isn't that the PI was acting in bad faith. It's that competing studies can reshape site behavior in ways standard monitoring won't catch. You have to be close enough to the data to notice.

The question sponsors don't always ask at feasibility

Most feasibility surveys ask whether competing studies exist. But even when they do, you'd be surprised how often the follow-up doesn't go deep enough:

- What is their stipend relative to yours?

- What is their visit burden?

- How long is their enrollment window?

If your budget per patient is too low relative to competing studies, sites will often deprioritize your trial. The reality is that sites are running businesses (see: Sites Are Quietly Quitting Your Studies). A coordinator who spends, say, four hours pre-screening a patient for a study that reimburses less and asks for more will, under real bandwidth pressure, prioritize accordingly. That's not a character flaw. That's resource allocation.

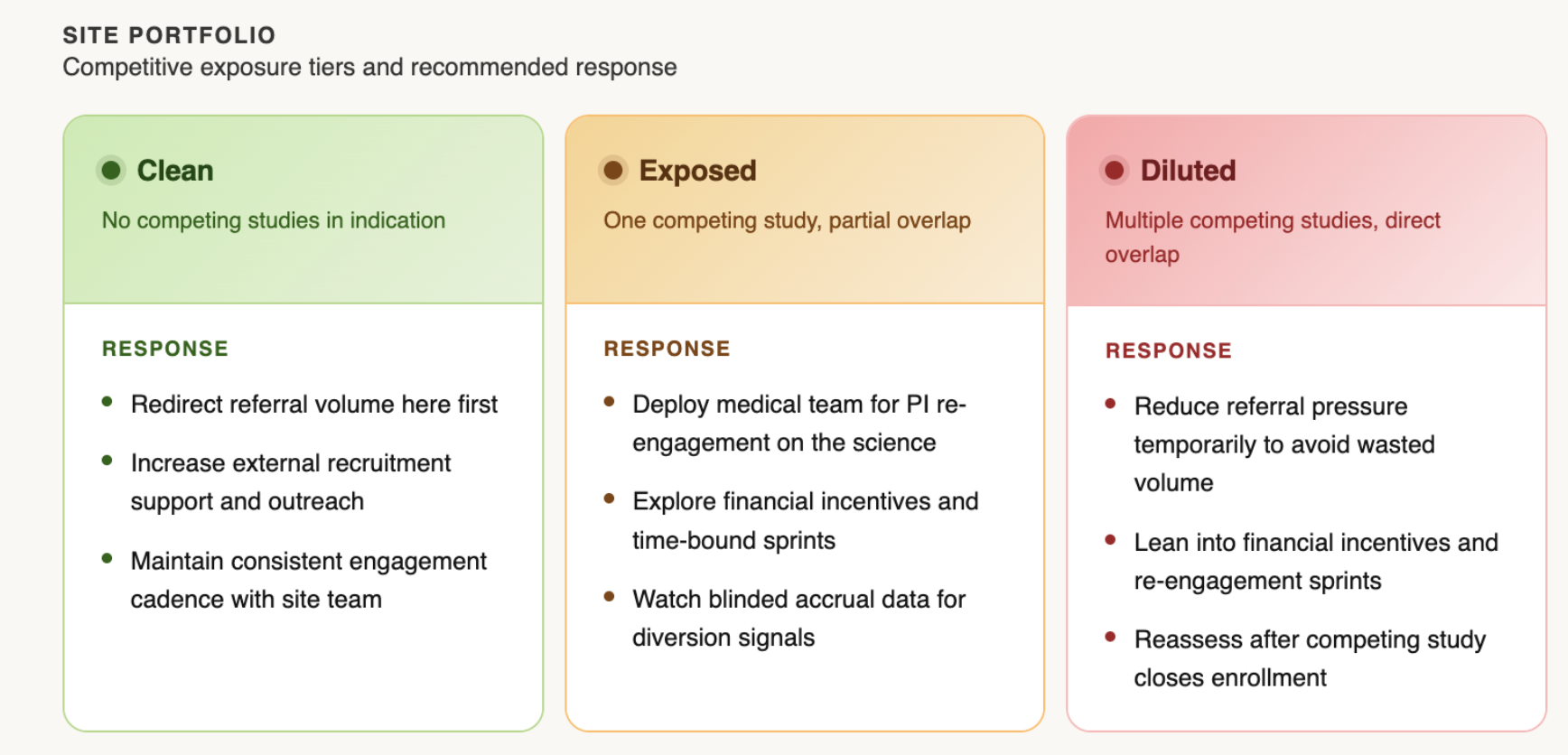

Three responses worth considering

Lean into your clean sites. When competition is concentrated at a subset of sites, redirecting referral volume toward sites with no competitive overlap can offset the drag. This requires visibility into which sites face competing studies and which don't (Why Every Trial Needs a Heat Map). Giving an overloaded site a temporary reduction in referral pressure isn't abandonment; it's recognizing that pushing more volume into a diluted environment tends to produce diminishing returns.

Engage the competition, carefully. When a site is stretched across multiple studies, new financial incentives and time-bound enrollment sprints can help keep your trial top of mind. The specifics matter: poorly structured arrangements can create unintended pressure on data quality, so loop in legal, contracts, and your CRO before acting. Done right, this is less about outbidding a competitor and more about signaling that you're paying attention.

Send in your medical team. Monitoring visits serve an important purpose, but they're not designed to re-engage a PI's clinical motivation. A sponsor-side medical director or MSL making a peer-level call often moves the needle in ways a site visit cannot. The conversation doesn't need to be about enrollment targets. It lands better when it focuses on the science: what the program is trying to show, why this patient population matters, and what this site's contribution means to the data. Brannan's approach at Karuna was simple: "Talk to me anytime, day or night." PIs who feel genuinely invested in a program find time for it.

What tends to make it worse

Adding sites is the natural reflex. But a new site takes roughly three months (often longer) from activation to first enrolled patient, and its early numbers will likely come from its existing "known patient" pool, the same source that drives month 1–2 performance everywhere. Adding sites doesn't solve the competitive problem. It delays the conversation and adds cost (The ROI Reality Check).

Over-monitoring the struggling site has a similar effect. More CRA visits add burden to a team that's already stretched, and can damage the sponsor-site relationship at exactly the moment you need it to be collaborative.

The honest reality

Competitive intelligence in trials is genuinely hard to maintain in real time. What separates programs that manage this well usually isn't a better monitoring system... it's staying close enough to your sites to catch the signal early.

Know which of your sites are clean, exposed, or diluted. Redirect resources accordingly. And when a site needs re-engaging, think about sending your medical team, not more monitoring visits.

It's something we think about a lot at Power, and we've built frameworks and resources around exactly this kind of challenge. If you're navigating competitive pressure in an active CNS or I&I trial and want to talk through what's working, we're happy to dig in.

Navigating competitive dilution in an active trial? Let's talk.